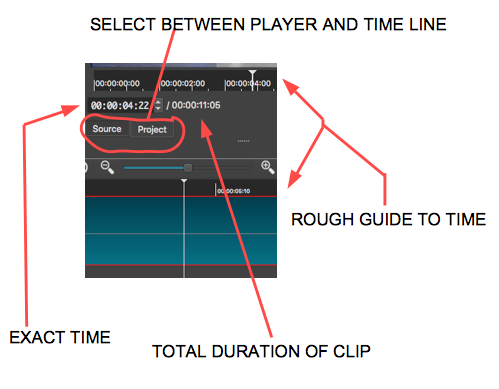

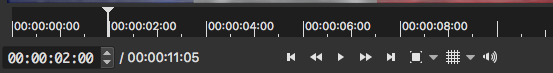

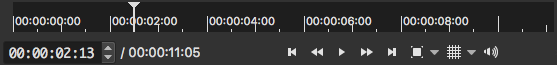

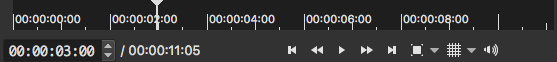

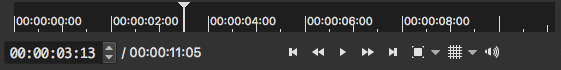

See, that “roughly” is the error. Scale ticks are supposed to be “on the value”, not anywhere in between. I don’t need a tick that is “somewhere”. I can get “somewhere” myself by clicking anywhere. It’s like using non-rectangular sheets of paper for a letter, because who the heck needs rectangles when you actually write your text somewhere else anyway? Or why use regular intervals? The app might as well throw in timeline ticks at random intervals without being any less predictable or helpful. I get the impression that you have never really used a real working scale, or understood how to use one.

And when it rains you may get wet. This is obvious. Yet, you cannot compare a scale with dynamic ticks with a real physical thing. When you “zoom” a real thing, it won’t change. You just see a different part of the same thing. A scale can change. And it should. This one actually does, but in a very poor way.

It’s the timeline’s job to show the time, not to “look clean” by being empty! That’s Apple design logic, remove everything useful so that it’s pretty. I’m sorry but the more I read from your arguments, the more it sounds completely stupid to me.

And you seem to believe that I am stupid and don’t have the slightest idea of what I’m doing here. Rest assured that I am fully aware of the concept of time and its various digital representations, as well as the concept of finite discrete data, be it audio samples or image pixels. I’ve been editing with a timeline (or pixel ruler) and zoom levels for three decades or so.

Your comparisons simply don’t apply. You argue that the timescale should more or less use the same absolute interval no matter the zoom level. This is nonsense. (And that is only my second point of criticism, not even the main one.) A timescale selects the ticks by evaluating the available screen space and how much of it a tick label takes. It then adds some margin to keep the labels readable and aligns the actual ticks to values that a human brain can easily consume and navigate. It’s supposed to provide orientation, not to confuse with noise. For most people in western Europe that would be the decimal scale. And that’s exactly what everybody else in this world does (as far as I’ve seen). Would you dare to state that every other developer of timescales or axes in general is wrong? After all, being wrong is often a matter of your point of view. To you, everybody else is wrong. To everybody else, you are wrong. Who’s right?
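To make the tick selection I describe above concrete, here is a minimal sketch in Python. It is not Shotcut's code and not taken from any particular library. The function names, the pixel parameters and the 1-2-5 decimal progression are my own assumptions for illustration, and a real timeline would probably also want steps that fit frames, minutes and hours.

```python
import math


def nice_step(min_step):
    """Return the smallest step of the form 1, 2 or 5 times a power of ten
    that is at least min_step (hypothetical helper, decimal scale assumed)."""
    if min_step <= 0:
        raise ValueError("min_step must be positive")
    base = 10.0 ** math.floor(math.log10(min_step))
    # 10 is included so a candidate is always found, even if the
    # logarithm was rounded slightly.
    for multiplier in (1, 2, 5, 10):
        if multiplier * base >= min_step:
            return multiplier * base
    return 10 * base


def ticks(view_start, view_end, width_px, label_px, margin_px=20):
    """Tick positions in seconds for the visible range [view_start, view_end].

    width_px  : width of the visible timeline in pixels (assumed input)
    label_px  : width of one rendered tick label in pixels (assumed input)
    margin_px : extra space between labels to keep them readable
    """
    seconds_per_px = (view_end - view_start) / width_px
    # Two labels need at least label_px + margin_px between them.
    step = nice_step((label_px + margin_px) * seconds_per_px)
    # Ticks are integer multiples of the step, so they sit "on the value"
    # and do not move relative to the time axis when scrolling.
    first = math.ceil(view_start / step)
    last = math.floor(view_end / step)
    return [i * step for i in range(first, last + 1)]
```

With a 60-second view that is 600 pixels wide and labels of 40 pixels, this gives one tick every 10 seconds. Scrolling the view to start at 3 seconds keeps the ticks at 10, 20, 30 and so on, instead of shifting them to 3, 13, 23.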

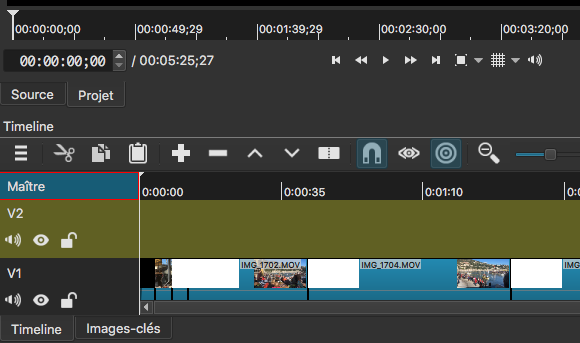

Wow, so this is “by design”. I’d say a particularly bad design. And “to improve timeline rendering performance”: are the Shotcut developers so bad at their job that they can’t compute proper time ticks efficiently enough? I hadn’t expected that, really! (Because until now, everybody could.)

If you do everything with your keyboard and leave the mouse untouched, if you know all the key shortcuts, then your point seems plausible. I, however, prefer a pointing device of some kind for all tasks that deal with graphical interaction.

I’ve spent some time now digging through the source code of Shotcut and MLT on GitHub but wasn’t able to locate the code that performs this tick selection. The closest interesting part was this. Maybe somebody could point me to the right place so I could analyse it and suggest an improvement, if one is desired at all.