Are there any plans to add some form of network rendering, so that one (or more) other systems can be used to render a project?

There is a streaming feature. I haven't tried it, but it might be something. Other than that, there is the qmelt CLI.

But there is nothing like what you're asking for. I don't know if anyone has made a web UI for qmelt yet.

I see there’s a way to do it with the melt CLI that Kdenlive uses. Is there a functional difference between qmelt (what Shotcut uses) and melt (what Kdenlive uses), since they both seem to be based on the same MLT framework?

Not sure, but Shotcut also uses melt, heh. By the way, here is the command I use for my benchmark:

Shotcut\qmelt.exe -progress -verbose TheFireEscape.mlt -consumer avformat:TheFireEscape.x264.mkv acodec=flac vcodec=libx264 preset=slow crf=16
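For anyone who wants to script this, here is a minimal Python sketch that assembles the same command line as an argument list. The function names `build_render_command` and `render` are made up for illustration, and it assumes the executable is reachable as `qmelt` (on Windows that would be the `Shotcut\qmelt.exe` path from the post above).

```python
import subprocess


def build_render_command(project, output, melt="qmelt", **encoder_options):
    """Return the argument list for a command-line render of an .mlt project."""
    command = [
        melt,
        "-progress",
        "-verbose",
        str(project),
        "-consumer",
        f"avformat:{output}",
    ]
    # Encoder settings follow the consumer as key=value pairs.
    command += [f"{key}={value}" for key, value in encoder_options.items()]
    return command


def render(project, output, **kwargs):
    """Run the render; raises CalledProcessError if the encoder exits non-zero."""
    subprocess.run(build_render_command(project, output, **kwargs), check=True)


# Rebuild the benchmark command from the post above (nothing is executed here).
command = build_render_command(
    "TheFireEscape.mlt",
    "TheFireEscape.x264.mkv",
    acodec="flac",
    vcodec="libx264",
    preset="slow",
    crf=16,
)
print(" ".join(command))
```

Passing a list to `subprocess.run` avoids shell quoting problems with file names that contain spaces.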

I wonder what qmelt is, then. It’s the encoder process I see called natively. It looks like an easy way to build a render farm has been requested in the Kdenlive forums as well, but to no effect.

Yeah, you can use a network share to store source files and project files, for example,

and then drop a project into a folder and automatically run it through qmelt/melt to encode it.

A simple Bootstrap-based interface to show the status would be cool. I have no proper coding skills at all, though. I only know simple HTML and Python/bash/batch, heh.

So that would be cool for a network render. I can’t imagine it would be too hard to autogenerate the scripts for an “offboard render”, where Shotcut uploads everything to a network server which processes it and sends a message when it’s done. That said, a cluster would be more useful (at least for me), where I could break the job up across 8 systems. We have old PowerEdge blade servers. They’re not fast on their own, but with 8 cores each they’re faster than any of the new i3/i5 laptops for stuff that chunks up well.

OK, I did some tinkering. As long as the source files and the .mlt file are in a single folder, they’re portable (which is good, I was worried about absolute paths). Now I just need to write a folder monitor that will process the .mlt file.
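A quick way to double-check portability is to scan the project file for absolute paths before copying it to another machine. The sketch below assumes that clip locations are stored in `<property name="resource">` elements of the MLT XML. The sample document is a simplified, made-up stand-in for a real project, and `absolute_resources` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET
from pathlib import PurePosixPath, PureWindowsPath


def is_absolute(path_text):
    """True for POSIX (/home/...), drive-letter (C:/...) and UNC (//server/...) paths."""
    return (
        PurePosixPath(path_text).is_absolute()
        or PureWindowsPath(path_text).is_absolute()
    )


def absolute_resources(mlt_xml):
    """Return the resource values in an MLT XML string that are absolute paths."""
    root = ET.fromstring(mlt_xml)
    found = []
    for prop in root.iter("property"):
        value = (prop.text or "").strip()
        if prop.get("name") == "resource" and value and is_absolute(value):
            found.append(value)
    return found


# Simplified sample, not a complete Shotcut project.
SAMPLE = """<mlt>
  <producer id="producer0">
    <property name="resource">clips/intro.mp4</property>
  </producer>
  <producer id="producer1">
    <property name="resource">C:/Users/me/Videos/outro.mp4</property>
  </producer>
</mlt>"""

print(absolute_resources(SAMPLE))  # ['C:/Users/me/Videos/outro.mp4']
```

An empty list suggests the project only refers to files relative to its own folder.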

So I found there’s a package called inotify-tools available for Ubuntu. I think I can use it to write a script to accomplish this. Has anyone used it before?
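If inotify is not available (for example on a Windows or Mac render box), a polling loop is a simpler, if less efficient, alternative. This is only a sketch using the Python standard library, not an inotify example. `new_projects`, `watch` and the `handle` callback are hypothetical names.

```python
import time
from pathlib import Path


def new_projects(folder, seen):
    """Return .mlt files in folder that are not yet in seen, and remember them."""
    fresh = sorted(p for p in Path(folder).glob("*.mlt") if p not in seen)
    seen.update(fresh)
    return fresh


def watch(folder, handle, interval=5.0):
    """Poll folder forever and call handle(path) once per new project file."""
    seen = set()
    while True:
        for project in new_projects(folder, seen):
            handle(project)
        time.sleep(interval)
```

Calling `watch("/path/to/dropfolder", print)` would just print each new project as it shows up. A real version would also need to make sure a file has finished copying before it starts encoding, which this sketch does not attempt.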

Here is something I think can work (kind of brainstorming on the spot now):

Keep all the source files and .mlt files in the same location, something like //server/projects/norsesaga.mlt

Have the interface for the web encoder on the server, and use Shotcut to edit over the network via the Samba share.

This might require 10GbE NICs if the source is really high bitrate or 4K, unless Shotcut can cache the files in lower quality locally.

- Edit with Shotcut with cache feature (useful for both over the network or local editing)

- Encoding Web Server, select the project from a list generated from the mlt files in //server/projects

- Encoding Web Server, select quality settings and name

- Execute the above settings on server with qmelt cli

- Output the encoded files to //server/encoded
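The server-side part of those steps could be sketched roughly like this in Python. Everything here is an assumption for illustration: the `PRESETS` table, the function names, and the idea that the web UI simply hands a project name, a quality label and an output name to `plan_job`. The returned list is meant to be passed to something like `subprocess.run`.

```python
from pathlib import Path

# Hypothetical quality presets; "high" mirrors the benchmark command quoted earlier.
PRESETS = {
    "high": {"vcodec": "libx264", "acodec": "flac", "preset": "slow", "crf": 16},
    "draft": {"vcodec": "libx264", "acodec": "aac", "preset": "veryfast", "crf": 28},
}


def list_projects(projects_dir):
    """Names of the .mlt files the web UI would offer for selection."""
    return sorted(p.name for p in Path(projects_dir).glob("*.mlt"))


def plan_job(projects_dir, encoded_dir, project_name, quality, output_name, melt="qmelt"):
    """Build the command that encodes one selected project into the output folder."""
    project = Path(projects_dir) / project_name
    output = Path(encoded_dir) / output_name
    command = [melt, str(project), "-consumer", f"avformat:{output}"]
    command += [f"{key}={value}" for key, value in PRESETS[quality].items()]
    return command
```

Keeping the presets in one table means the web page only has to show a drop-down of preset names, not individual encoder options.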

Let me try writing a super simple batch or bash script tomorrow and see if the Samba share works for encoding with qmelt.

How did it turn out?

One more bump to add to this saga: I just noticed Adobe Media Encoder has an “auto encode watch folders” feature. I wonder how they implemented it.

May I bump this up too?

In my case I actually work on slower laptops, which are enough for my not-so-power-hungry tasks. But I have access to a powerful PC in another part of the house that I would be happy to send the rendering job (encoding H.264) to. It could be offline (transfer on a USB drive) or over the local network (through gigabit Ethernet), but I still can’t find an easy way to do it.

If you keep everything in the same folder, the project is portable, which I’ve done before between my laptop and desktop. Since Shotcut runs on Windows/Mac/Linux, I’ve been able to render using a Linux-based server as well. What I haven’t been able to do is spread things out across multiple nodes the way you can with a scene in something like Blender, but that’s a separate issue.

Right now I move projects around on one of these https://amzn.to/31HXzGB

Although if I was going to get something specifically for it I’d probably mix this https://amzn.to/2Z52lMA and a ssd like this https://amzn.to/2TBqsS2

Thank you for clarifying this!

I need to do research on one more aspect that may be related to other methods too.

@D_S Do you think a faster drive would improve exporting performance for you?

Is the fastest drive really necessary when you just export video? You do not need fast seeking performance, since you are not jumping around on the timeline, just reading and writing. I mean, a non-SSD drive can be read and written at 80-90 MB/s (sequential).
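To put that 80-90 MB/s figure in perspective, here is a small back-of-the-envelope calculation in Python. The two source bitrates are illustrative assumptions, not measurements from this thread, and the calculation ignores writes, multiple simultaneous clips and seek overhead.

```python
def realtime_factor(drive_mb_per_s, source_mbit_per_s):
    """How many times faster than real time a drive can deliver one source stream."""
    source_mb_per_s = source_mbit_per_s / 8  # 8 bits per byte
    return drive_mb_per_s / source_mb_per_s


for label, mbit in [("50 Mbit/s camera file", 50), ("400 Mbit/s intermediate", 400)]:
    print(label, round(realtime_factor(80, mbit), 1))
# 50 Mbit/s camera file 12.8
# 400 Mbit/s intermediate 1.6
```

So with a typical compressed camera file the drive is unlikely to be the limit unless the encoder runs faster than roughly 12x real time, while a high-bitrate intermediate leaves much less headroom.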

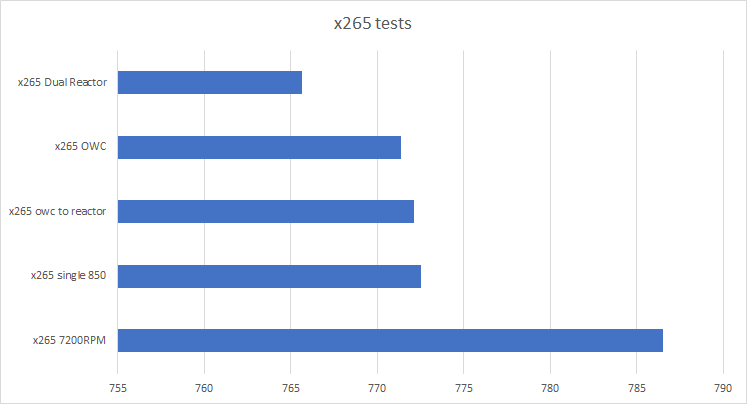

That depends on a lot of factors, but the general answer is that a faster drive helps up to a point. Here is some testing I’ve done with a few SSDs and a spinner. The best results I got were on a SATA SSD RAID 0 (because that PCIe SSD wasn’t very fast), but I was also using extremely fast CPUs (dual Xeon X5690). I could run a similar test on something like an i5-7600K, but the general rule would still hold.

All that said depending on how long you plan to wait before picking up your completed file it may be moot. If you aren’t planning to pick your video up until the following day the difference between 5 minutes and 5 hours isn’t visible at all.

Hi there,

Is there anything new on this subject? The computer I’m using with Shotcut is good enough to edit a video, but it becomes unusable while I export the final video… And I have a few computers doing not much that could handle the export…

So, is there a command line I can grab somewhere to just launch the video production on another machine with the same files? I’d like to connect via ssh, without using a GUI.
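One possible shape for this, sketched in Python: build an `ssh` invocation that runs melt on the other machine. The host name `render-box` is hypothetical, and the sketch assumes `melt` is installed and on the PATH of the remote machine, and that the project and its source files already sit there under the same relative paths. Whether a given project renders without a display on the remote side is not something this sketch checks.

```python
import shlex


def build_ssh_render(host, project, output, melt="melt", **encoder_options):
    """Return an argument list that runs a melt render on a remote host via ssh."""
    remote = [melt, project, "-consumer", f"avformat:{output}"]
    remote += [f"{key}={value}" for key, value in encoder_options.items()]
    # ssh passes the remote command to a shell, so quote it as a single string.
    return ["ssh", host, shlex.join(remote)]


print(build_ssh_render("render-box", "project.mlt", "project.mp4",
                       vcodec="libx264", crf=20))
# ['ssh', 'render-box', 'melt project.mlt -consumer avformat:project.mp4 vcodec=libx264 crf=20']
```

The resulting list can be handed to `subprocess.run`, or the quoted string can be copied into a terminal after `ssh render-box`.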

Thanks.

I did find a way to do it in the thread “Does shotcut need a disply”, but it’s been a while (that was 2016), so it will likely need some tweaks.

EARLY ACCESS: Cluster Rendering for Shotcut (v0.8.0.0) – Render on your RPi or spare computers!

Hi everyone!

I’m excited to share a Proof of Concept project that makes distributed video rendering possible for Shotcut users.

This project, ClusterProcessing for Shotcut, lets you split a complex Shotcut project and render the different parts simultaneously across a network of computers (like a Raspberry Pi cluster or any spare PC).

What is it?

- It reads your Shotcut .mlt project file.

- It automatically distributes the rendering tasks to your connected computers.

- It securely collects the finished clips and combines them into one final video.

Current Status (v0.8.0.0)

- Result: You will see about 10–20% improvement in rendering time right now.

- Security: Setup is simple and secure, using SSH key-based authentication (no insecure OS auto-login required!).

- Limitation: It currently only supports basic cuts and single-track projects. Advanced filters/effects are not yet tested or supported.

I Need Your Help!

The biggest challenge is making the distribution intelligent. Right now, it distributes the workload evenly. To get major speed gains, we need to:

- Measure network speed and CPU power of each machine.

- Assign tasks dynamically (faster machines get more work).
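As a rough illustration of "faster machines get more work", here is a minimal Python sketch that splits a frame range in proportion to a per-machine weight. This is not the project's code. `split_frames` and the weights are hypothetical, and how to measure a sensible weight (CPU benchmark, network speed) is left open.

```python
def split_frames(total_frames, weights):
    """Split frames 0..total_frames-1 into contiguous (start, end) ranges,
    one per machine, sized in proportion to the given weights."""
    total_weight = sum(weights)
    ranges = []
    start = 0
    cumulative = 0
    for weight in weights:
        cumulative += weight
        # Rounding the cumulative boundary keeps the ranges gap-free.
        end = round(total_frames * cumulative / total_weight)
        ranges.append((start, end - 1))
        start = end
    return ranges


print(split_frames(1000, [1, 1, 1, 1]))  # even split, as the tool does today
# [(0, 249), (250, 499), (500, 749), (750, 999)]
print(split_frames(1000, [1, 1, 2]))     # third machine is twice as fast
# [(0, 249), (250, 499), (500, 999)]
```

A static split like this still leaves machines idle if the estimates are off. Handing out many small chunks from a shared queue is another option, at the cost of more segments to join at the end.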

I welcome anyone interested in testing, providing feedback, or helping with the code!

(P.S. The core code was generated by Google Gemini AI based on my functional design!)

Link to the Code & Documentation (GitHub) GitHub - UbuntuRikard/Make_ClusterProcessing_for_Shotcut