Hello there

I am trying to normalize audio to -0.4 dB, but can't seem to figure out how. Everything is in LUFS, or too high or too low in dB.

dB is a relative scale. You want to normalize audio to -0.4dB relative to what? The original source?

The LUFS scale is an absolute scale. It stands for Loudness Units relative to Full Scale. One loudness unit is roughly equivalent to one dB, so it is easy to think of LUFS as dBFS (dB Full Scale).
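To make the "relative to what?" point concrete, here is a minimal sketch of how a dB value maps to a linear amplitude once the reference is fixed at digital full scale. It assumes floating-point samples where 1.0 is full scale; the function names are made up for illustration and are not part of Shotcut.

```python
import math

# Assumption: floating-point audio where an amplitude of 1.0 is full scale.
FULL_SCALE = 1.0


def amplitude_to_dbfs(amplitude: float) -> float:
    """Convert a linear amplitude to dB relative to full scale (dBFS)."""
    if amplitude <= 0:
        return float("-inf")  # silence has no finite dB value
    return 20.0 * math.log10(amplitude / FULL_SCALE)


def dbfs_to_amplitude(dbfs: float) -> float:
    """Convert a dBFS value back to a linear amplitude."""
    return FULL_SCALE * 10.0 ** (dbfs / 20.0)


if __name__ == "__main__":
    # -0.4 dBFS is a peak at roughly 95.5 % of full scale.
    print(dbfs_to_amplitude(-0.4))
    # Half of full scale is roughly -6 dBFS.
    print(amplitude_to_dbfs(0.5))
```

Without a reference such as full scale, "-0.4 dB" only says "a little quieter than something", which is why the question above matters.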

In most situations, -23 LUFS is a good level to normalize to.

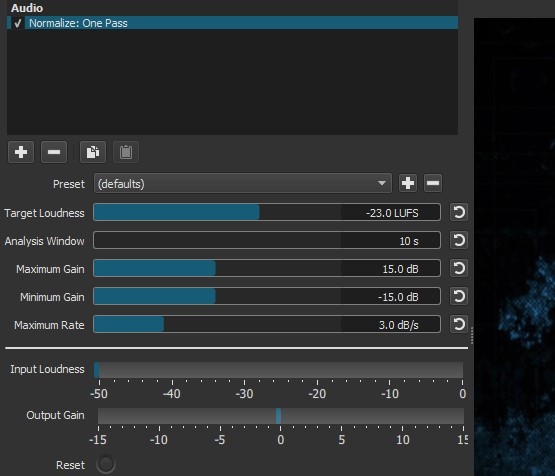

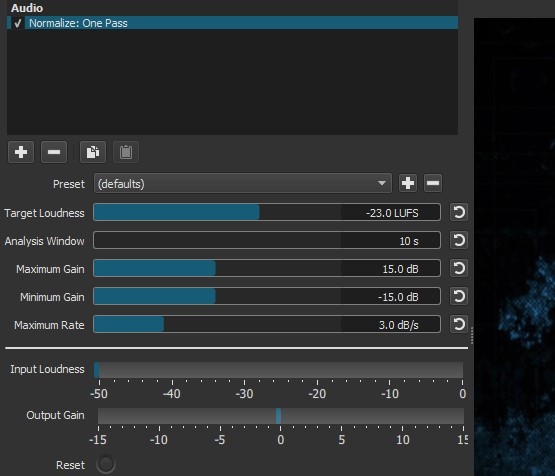

If you want to normalize to a different level, just change the Target Loudness to something else.

The minimum and maximum gain dictate the most that the filter will increase or decrease the level of the original source.
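As a rough illustration of what those limits mean, the sketch below computes the gain needed to reach a target loudness and then clamps it to a minimum and maximum. This is only an assumption about the general idea, not Shotcut's actual implementation, and the function and parameter names are hypothetical.

```python
def normalization_gain_db(measured_lufs: float,
                          target_lufs: float,
                          min_gain_db: float,
                          max_gain_db: float) -> float:
    """Gain in dB that moves the measured loudness toward the target,
    limited to the range [min_gain_db, max_gain_db].

    Assumption: one loudness unit corresponds to one dB of gain.
    """
    wanted_gain_db = target_lufs - measured_lufs
    return max(min_gain_db, min(max_gain_db, wanted_gain_db))


if __name__ == "__main__":
    # A source measured at -30 LUFS needs +7 dB to reach -23 LUFS.
    print(normalization_gain_db(-30.0, -23.0, -15.0, 15.0))
    # A very quiet source at -45 LUFS would need +22 dB,
    # but the maximum gain limits the boost to +15 dB.
    print(normalization_gain_db(-45.0, -23.0, -15.0, 15.0))
```

With limits like these, a very quiet or very loud source may not reach the target exactly, because the filter is not allowed to change the level by more than the configured amount.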

What is the difference between a “Loudness Unit” and dB (decibel)? You say they are “roughly” equivalent. What if I wanted to normalize to, say, exactly -3dBFS?
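For reference, normalizing to an exact dBFS value usually means peak normalization, which is a different technique from loudness (LUFS) normalization. A minimal sketch follows, assuming mono floating-point samples where 1.0 is full scale; `peak_normalize` is a hypothetical helper, not something Shotcut provides.

```python
def peak_normalize(samples, target_dbfs):
    """Scale samples so the largest absolute value sits at target_dbfs.

    Assumption: samples are floats where 1.0 is digital full scale.
    This looks only at the sample peak, not at perceived loudness.
    """
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # silence or empty input: nothing to scale
    target_amplitude = 10.0 ** (target_dbfs / 20.0)
    gain = target_amplitude / peak
    return [s * gain for s in samples]


if __name__ == "__main__":
    audio = [0.1, -0.5, 0.25]
    normalized = peak_normalize(audio, -3.0)
    # The new peak is 10 ** (-3 / 20), roughly 0.708 of full scale.
    print(max(abs(s) for s in normalized))
```

Two clips peak-normalized to the same value can still sound quite different in loudness, which is the gap that loudness units are meant to close.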

I’ve been puzzled by Shotcut’s normalizing filter, too.

Because I’ve worked with Audacity on other, audio-only projects, I’m comfortable with its normalizing “effect” (their term). Using Shotcut, I render only the audio, massage it with Audacity, and bring that file (.wav) back into Shotcut. The Audacity effect says “Normalize maximum amplitude to -1 dB”. Whatever that means. All I care about is that it gives me consistent maximum levels when using multiple audio tracks.

How does that translate into the Shotcut normalize filter?

Hi!

Is there any documentation of the Normalize filter that covers all the settings?

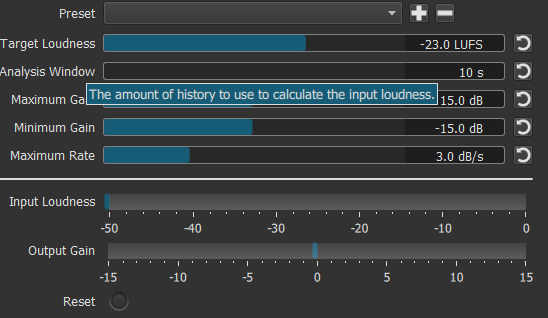

E.g., what is “Analysis Window” in seconds?

What does “Maximum Rate” do?

It would be nice to have some presets come with Shotcut, like “-1db”, …, “-3db”…etc…

PS: I love this app!

As @brian wrote, LUFS is absolute whilst other measurements are relative to something else.

The LUFS system also takes into account the slope, and hence the perceived loudness, of human hearing at different frequencies.

The LUFS system was introduced for broadcasting (normally at -23 LUFS), but platforms like YouTube, Spotify, Apple Music, etc. have also adopted it to stop the loudness war madness.

Their target level varies between -16 and -14 LUFS.

The EBU R128 (LUFS) system is explained in the very lengthy document:

The Audacity way of normalizing is outdated: it is based on peak amplitude, not perceived loudness.

Furthermore, be very careful, as Audacity does not send the correct audio signal levels to plugins; there is an added gain of around 4-6 dB. This then creates wrong results.

Even the built-in Nyquist (Lisp) language based plugins often return nonsense and wrong values.

Audacity is quick and easy to work with, but not for accurate work.

There are better and more accurate free audio editors like Ocenaudio.

I have done extensive tests with the LUFS filter included in SC and can tell you it’s spot on. The results always agree with a professional metering system, and the audio I have normalized using SC has never failed QC at our station.

It’s worth the time and effort in learning the LUFS system as it is the norm these days.

Did you notice that when you mouse over the text in the UI a tool tip pops up with some extra information?

There are some other posts in the forum history that are easy to find by searching:

I am going to close this very old thread. Have a look at the tool tips, read some older posts and then feel free to start a new thread with a more specific question.