Hello,

I edited several short video clips (1280 x 720 MP4, 12 minutes each).

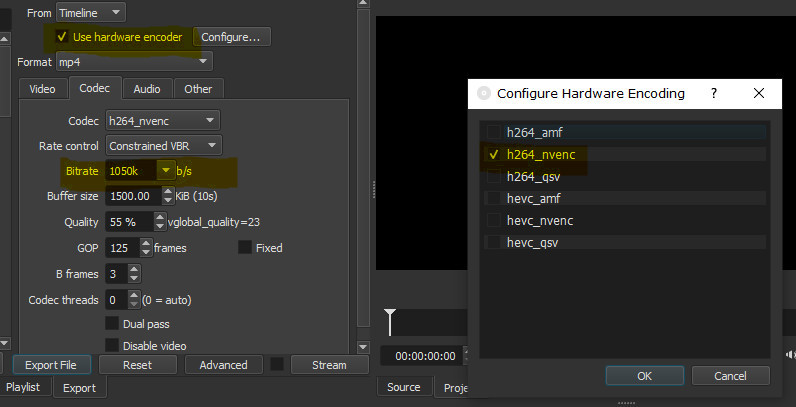

I configured the program to use hardware encoding and set the bitrate to 1050 kb/s.

However, the finished videos came out at only 589 kb/s…

I have attached a screenshot of the configuration.

Program version: 19.12.16

Graphics card: Nvidia GT-710 (latest driver)

OS: Windows 10 1809 x64

What went wrong?

Any answers would be appreciated.

Thanks

Motim

As far as I can tell, there is nothing wrong. You are using constrained VBR, which is not the same as average bitrate (ABR). With constrained VBR the encoder does not necessarily use all of the bitrate you set; instead, that value is a maximum over some short measuring period. The “V” in “VBR” stands for variable. The value you reported from your tool is the average bitrate over the entire duration of the video.
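To illustrate the difference between a per-window cap and the whole-file average, here is a minimal sketch in Python. The per-second bitrates are invented numbers, not measurements from the videos above, and the fixed sliding window is a simplifying assumption: real encoders enforce the cap through a buffer model (VBV/HRD) whose exact window length is not stated in this thread.

```python
# Invented per-second bitrates (kb/s) for a short clip encoded with a
# 1050 kb/s cap. Busy scenes hit the cap; static scenes need far less.
samples = [1050, 1050, 400, 300, 300, 1050, 500, 350]


def average_bitrate_kbps(per_second_kbps):
    """Average over the whole clip: total kilobits / total seconds.
    This is the kind of figure a media-info tool reports for a file."""
    return sum(per_second_kbps) / len(per_second_kbps)


def peak_window_kbps(per_second_kbps, window_s):
    """Highest average bitrate over any sliding window of window_s seconds
    (a simplified stand-in for the encoder's short measuring period)."""
    best = 0.0
    for start in range(len(per_second_kbps) - window_s + 1):
        window = per_second_kbps[start:start + window_s]
        best = max(best, sum(window) / window_s)
    return best


def respects_cap(per_second_kbps, cap_kbps, window_s):
    """True if no window exceeds the configured maximum bitrate."""
    return peak_window_kbps(per_second_kbps, window_s) <= cap_kbps


if __name__ == "__main__":
    print("average:", average_bitrate_kbps(samples), "kb/s")
    print("peak 2 s window:", peak_window_kbps(samples, 2), "kb/s")
    print("within 1050 kb/s cap:", respects_cap(samples, 1050, 2))
```

Here the encoder never exceeds the 1050 kb/s cap in any 2-second window, yet the whole-clip average is only 625 kb/s, because the quieter stretches did not need the full budget.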

Hello,

Thanks for the answer.

I adjusted the settings as you explained and re-encoded the videos. The results are satisfactory.

Thanks again.

Motim
