All of my clips are at a constant 60 fps, and I render my project at a constant 60 fps. I am not using hardware encoding. My audio and video are on separate tracks. I read that the audio gets shifted 6 frames ahead, but I couldn’t find what exactly triggers that, and there’s no way in hell that I, a human, can notice when a 6-frame desync has occurred. It’s cumulative: when rendered/exported, the audio starts out in sync, but by the end it’s roughly a full second earlier than it should be. I can’t spot a 6-frame shift when it occurs while watching the exported video, and within Shotcut itself everything is perfectly synced. I’m ready to explode. Please, someone… anyone… help me.
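
For scale, here is a rough sketch of the arithmetic behind those numbers. It only converts between frames and seconds at the 60 fps stated above. The idea that the drift builds up in separate 6-frame steps is an assumption taken from what was read elsewhere, not something confirmed here, and the function names are made up for illustration.

```python
FPS = 60  # project frame rate stated in the post


def frames_to_seconds(frames, fps=FPS):
    """Convert a frame count to a duration in seconds."""
    return frames / fps


def seconds_to_frames(seconds, fps=FPS):
    """Convert a duration in seconds to a frame count."""
    return seconds * fps


def shifts_needed(total_drift_seconds, frames_per_shift=6, fps=FPS):
    """How many shifts of `frames_per_shift` add up to the total drift.

    Assumes (hypothetically) that the drift accumulates in equal steps.
    """
    return seconds_to_frames(total_drift_seconds, fps) / frames_per_shift


if __name__ == "__main__":
    print(frames_to_seconds(6))   # length of one 6-frame shift, in seconds
    print(seconds_to_frames(1))   # frames in the ~1 second drift at the end
    print(shifts_needed(1.0))     # 6-frame shifts that would add up to ~1 second
```

So a single 6-frame shift is about a tenth of a second, and a full second of drift would correspond to roughly ten such shifts spread across the timeline.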

Did you read this information here?

What are the properties of the audio?

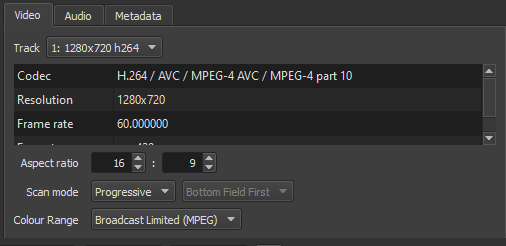

What are the properties of the video?

Are the video and audio from different sources (recording devices or cameras)?

Yes, I read the 6 frame thing while trying to find a solution for the issue.

The audio and video are from different sources, yes.

The video consists of .png images (multiple sources) and .mp4 videos (recorded with OBS).

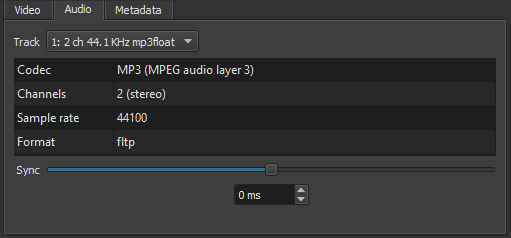

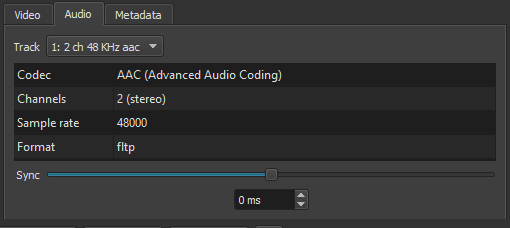

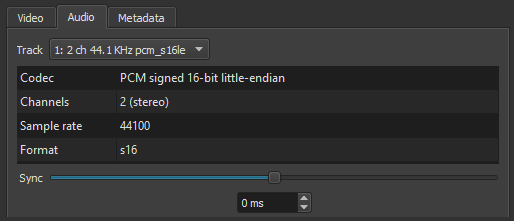

The audio comes from multiple different sources: a mixture of .wav and .mp3 files, some exported from Audacity, others from unknown sources (downloaded from the internet).

Video:

(Format: yuv420p)

Audio (Type 1):

Audio (Type 2):

(Taken from a video clip)

Audio (Type 3):

(.wav)

The final audio type is the same as Type 3, but it’s mono, not stereo.

The audio files have different sampling rates. I don’t know whether the audio at 44.1 kHz can affect the synchronization in any way. I read about this in other forums, and I think it had to do with the fact that audio is resampled to 48 kHz when the video is exported.

I do not usually combine audio files of different sampling frequencies to avoid problems.

If necessary I do a conversion with resampling.
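
If it helps, here is a minimal sketch of how you could check which .wav files are not already at the export rate before deciding what to resample. It uses Python's standard `wave` module, so it only reads uncompressed PCM .wav files (not .mp3). The 48 kHz target is an assumption based on the export rate mentioned above, and the function names are just illustrative.

```python
import wave

TARGET_RATE = 48000  # assumed export sample rate in Hz; adjust to your project


def wav_sample_rate(path):
    """Return the sample rate (Hz) stored in a PCM WAV file's header."""
    with wave.open(str(path), "rb") as wav_file:
        return wav_file.getframerate()


def find_mismatched_wavs(paths, target_rate=TARGET_RATE):
    """Return {path: rate} for every WAV whose rate differs from target_rate."""
    mismatched = {}
    for path in paths:
        rate = wav_sample_rate(path)
        if rate != target_rate:
            mismatched[str(path)] = rate
    return mismatched


if __name__ == "__main__":
    import sys

    # Usage: python check_rates.py clip1.wav clip2.wav ...
    for name, rate in find_mismatched_wavs(sys.argv[1:]).items():
        print(f"{name}: {rate} Hz (project target is {TARGET_RATE} Hz)")
```

Any file it lists is a candidate for converting to the target rate in an audio editor before importing it into the project.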

I don’t know if this can help you in any way.

Sample rate 44100 vs 4800? (reduser.net)
