The “out” offset was considered and is handled in the code. I gave you a quick example that is easy to read and understand; the actual time calculation and conversion in my code are much more complicated.

Actually, in the earlier version, if I create a project at 1000 fps, the subtitle mlt can be accurate to the millisecond, both in and out.

The difficult part is a video mode such as 23.98 fps: each frame is 0.04170141784820684 seconds, so after a minute you no longer know exactly where the out point is.
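To make the size of the problem concrete, here is a minimal Python sketch. It is not Shotcut's or MLT's actual calculation; it only assumes a tool that rounds the per-frame duration to whole milliseconds and then adds it up frame by frame. The names `FPS`, `frames` and `drift_ms` are made up for this illustration.

```python
from fractions import Fraction

# 23.98 fps as written above, kept as an exact fraction (hypothetical setup).
FPS = Fraction(2398, 100)

# Exact duration of one frame in seconds (about 0.0417014178...).
frame_duration = 1 / FPS

# Number of whole frames that fit into one minute.
frames = int(60 * FPS)

# Exact position of that frame boundary, in milliseconds.
exact_ms = frames * frame_duration * 1000

# Naive approach: round one frame to whole milliseconds, then multiply.
rounded_frame_ms = round(frame_duration * 1000)
accumulated_ms = frames * rounded_frame_ms

# Difference between the naive and the exact position after about a minute.
drift_ms = float(accumulated_ms - exact_ms)
print(frames, rounded_frame_ms, drift_ms)
```

Under these assumptions the naive sum ends up several hundred milliseconds away from the exact position after one minute, which is why the frame index, not an accumulated rounded duration, has to be the source of truth.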

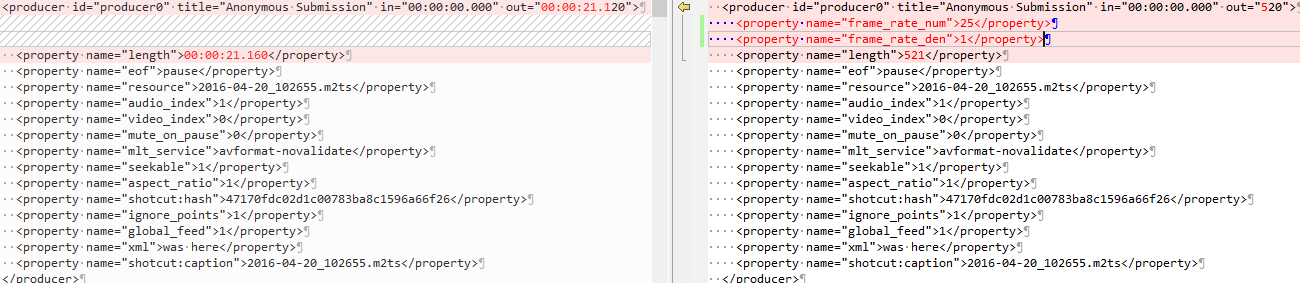

That’s what I find strange after dozens of tests. For example, when a frame is 66.66666666… milliseconds, Shotcut sometimes uses 66.6666 and sometimes 66.6667, but it definitely does not use 66.66666 or 2/3.
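The two values are what you get from truncating versus rounding the same number to four decimals. The sketch below only shows that arithmetic; it does not claim that Shotcut takes either path internally, and the 15 fps frame rate is an assumption inferred from the 66.666… ms figure.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP

# One frame at an assumed 15 fps, in milliseconds: 1000 / 15 = 66.666...
frame_ms = Decimal(1000) / Decimal(15)

four_places = Decimal("0.0001")

# Cutting off after four decimals.
truncated = frame_ms.quantize(four_places, rounding=ROUND_DOWN)

# Rounding to the nearest value with four decimals.
rounded = frame_ms.quantize(four_places, rounding=ROUND_HALF_UP)

print(truncated, rounded)
```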

This is incorrect. In an .mlt file, the timeline is defined by a “playlist”, which normally looks like:

In: 0.000 Out: 0.960

In: 0.000 Out: 0.960

In: 0.000 Out: 0.960

If something like “In: 1.000 Out: 1.960” is used, it means you are using the source video between 1.000 and 1.960 seconds.

Now you can see where the drift comes from. If the timeline has “In: 0.000 Out: 0.961” because of a wrong calculation, or because the user simply changed the frame rate, the whole timeline will drift by a lot.
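A rough way to see how a one-millisecond error adds up is sketched below in Python. It assumes a 25 fps project (40 ms per frame), that “Out” marks the start of the last frame shown, and that the times are taken literally rather than snapped to frames; none of this is taken from MLT's source. `timeline_length_ms` is a hypothetical helper written for this example.

```python
FRAME_MS = 40  # assumed 25 fps project: one frame lasts 40 ms


def timeline_length_ms(entries, frame_ms=FRAME_MS):
    """Total length of a playlist given (in_ms, out_ms) pairs.

    "out" is treated as the start of the last frame shown, so each
    entry lasts out - in plus one frame.
    """
    return sum(out_ms - in_ms + frame_ms for in_ms, out_ms in entries)


# 1000 entries with the expected values: In 0.000, Out 0.960.
correct = [(0, 960)] * 1000

# The same playlist with Out miscalculated as 0.961.
off_by_one_ms = [(0, 961)] * 1000

drift_ms = timeline_length_ms(off_by_one_ms) - timeline_length_ms(correct)
print(drift_ms)  # 1000
```

With these assumptions, a single millisecond per entry becomes a full second of drift after 1000 entries.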